Mythos did something unusual when it launched. It hard-coded which AI model could be used for which task category inside its platform. Customer-facing responses could only use smaller, lower-risk models. Financial analysis required human review before output was released. The model choice wasn’t a settings menu. It was in the architecture.

Most organisations building on AI haven’t made that choice. They’ve added AI to existing systems without stopping to ask what decisions that AI is now authorised to make, what should happen when it flags a problem, whether anyone will notice if it starts behaving differently and how much damage it can do before someone pulls the cord.

Those four questions have to be answered before you go live. The discipline to answer them already exists. It’s been running reliably in other industries for decades.

Decision boundaries

Every AI system makes decisions. The practical question is which ones it’s allowed to make alone, which need a human in the loop and which it isn’t permitted to make at all. Without that answer built in, the system acts at the limits of what’s technically possible, not the limits of what’s organisationally appropriate.

This isn’t a policy problem. A document that describes what the system should do isn’t a constraint. The system can’t read it during an incident. The boundary has to be structural: at this point the system acts, at this point it waits, at this point it cannot proceed regardless of the instruction it receives.

Tooling like NemoClaw exists specifically to encode these limits at the infrastructure layer, defining which tools an agent can invoke, which commands are restricted and what it cannot do regardless of what it’s told.

Escalation layers

A system that can detect a problem and a system that can do something about it are not the same thing. Detection is a capability. The path from detection to response is an architectural decision.

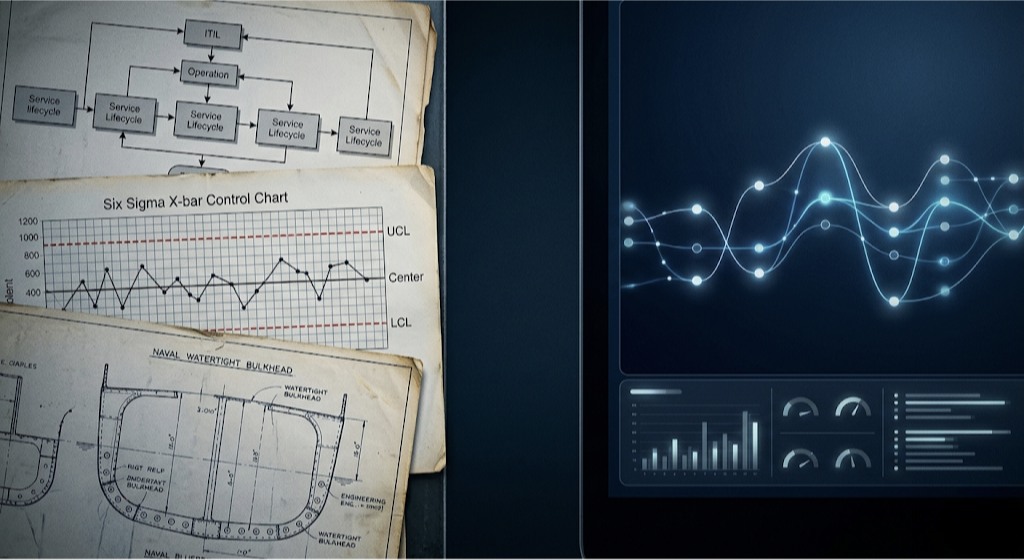

IT operations solved this a long time ago. ITIL incident management codifies exactly this: a defined ladder from detection to response, with owners at each rung, time limits before automatic escalation and a clear answer to what happens when nobody acts. A P1 incident gets paged within 15 minutes. If nobody responds, it escalates. The path isn’t decided in the moment. It’s designed in advance and tested before anything goes live. This has been standard practice in well-run operations teams for decades.

The question is why it didn’t transfer.

A lawsuit was filed earlier this year against OpenAI by a stalking victim whose abuser had used ChatGPT over an extended period. The victim reported the threat to OpenAI three times. OpenAI’s own systems generated an internal flag classifying his account activity as involving mass-casualty weapons. Neither reached anything with the authority to act. The user was arrested on four felony counts. The signals were there. Nobody had built what came next.

The same organisation that would never ship a production database without an incident management framework shipped a system that could identify serious harm with no equivalent. The ITIL question was never asked.

Before any system that can flag goes live, the ladder needs to exist: who receives it, what they’re expected to do, how long before automatic escalation and what the top rung looks like. Then test it with a simulated flag before anything is real.

Operational telemetry

You cannot govern a system you cannot see. Most production AI deployments have logs. Logs are archaeology. By the time a pattern surfaces, the failure has been happening long enough to matter.

Manufacturing quality control solved this in the 1920s. Statistical Process Control, the discipline that underpins Six Sigma, starts from a single insight: post-production inspection fails. By the time you find defects in finished output, the process has already produced a run of bad work. The answer is to define control limits before the process starts, monitor continuously while it runs and treat deviation from those limits as a signal requiring investigation, not a surprise requiring explanation. You set the baseline first. Then you watch against it.

Gartner’s survey of 782 infrastructure and operations leaders found that just 28% of AI use cases fully succeed and meet ROI expectations. The most common reason wasn’t model quality. It was absent monitoring: systems deployed and left to run, where nobody noticed the drift until it was expensive. The visibility layer is what makes governance possible in real time rather than in postmortems.

Defining expected behaviour before deployment is where to start, because you cannot measure deviation from a baseline you never set. LLM-as-a-judge provides the measurement instrument: a second model that continuously samples outputs against a defined quality standard and flags when scores drift outside the range you set. From there: a dashboard someone looks at regularly, not just an alert waiting to fire. And a standing weekly review, because the trend matters as much as the threshold.

Failure containment

Something will fail. The useful question isn’t how to prevent it. It’s how much damage it can cause before it stops, and whether that number was designed or discovered.

Naval architecture codified this centuries ago. Ships are divided into watertight compartments precisely because flooding is assumed, not prevented. One compartment floods, the ship stays afloat. The design question isn’t whether water gets in. It’s how far it travels before it stops. The Titanic had bulkheads. They didn’t reach the top deck. Water overtopped them and moved forward, compartment by compartment. The containment looked right. Under the conditions that actually occurred, it wasn’t truly isolated.

ChipSoft, a healthcare IT provider running systems across multiple European hospitals, was hit by ransomware in 2024. The attack entered through a connected vendor system. Because the hospital networks were tightly integrated, the ransomware propagated across segments before the original breach was isolated. Several hospitals switched to pen and paper during recovery. The bulkheads weren’t high enough.

Blast radius is a design constraint, not an incident metric. It means isolation by default, with integration as a deliberate choice rather than a starting assumption. It means defining the minimum viable operating state before anything breaks, so recovery doesn’t require full system health before partial function is restored. And it means asking in design, not in retrospect: if this component fails, how far does it travel before it stops?

Closing

The pattern across all four sections is the same. ITIL has defined escalation paths for IT operations since the 1980s. Statistical Process Control has been monitoring industrial processes against baselines since the 1920s. Naval architects have been designing for blast radius since ships first had compartments worth protecting. These disciplines work. They’re proven. They’re available.

None of it transferred when AI arrived.

The governance conversation in most organisations is still about the AI. Which model, which vendor, which use case. The architecture around it gets less attention. That’s where the exposures live, and the tools to address them are sitting in plain sight.

Four questions. What is this system authorised to decide? What happens when it flags a problem? Is anyone watching in real time? How much damage can it cause before it stops?

Sources

- Gartner, April 2026: AI Projects in Infrastructure and Operations Stall Ahead of Meaningful ROI Returns

- TechCrunch, April 2026: Stalking victim sues OpenAI, claims ChatGPT fueled her abuser’s delusions and ignored her warnings

- Bloomberg Law: OpenAI Accused of Pushing Stalker’s Delusion Through ChatGPT

- NVIDIA: NemoClaw — Deploy Safer AI Assistants with OpenClaw Safety Guardrails

Leave a Reply