There is a project on GitHub called OpenClaw. It appeared in November last year. By March it had more stars than any open-source project in history. It connects to your email, your calendar, your files and your messaging apps, and it acts on your behalf around the clock.

One user set it up to manage his inbox while he slept. Another used it to build a full web application while she grabbed a coffee. A third watched it pick a fight with his insurance company, and win a claim reinvestigation.

OpenClaw is not enterprise software. It has no governance framework and no audit trail, and a security audit found over 500 vulnerabilities. It is, by any reasonable measure, not ready for organisations to depend on.

And yet it is everywhere, because what it demonstrates is not going away: AI that acts, decides and commits on your behalf. Enterprise versions of this capability are being built right now by Nvidia, IBM and every major platform vendor. The question is not whether your organisation will have AI that behaves like this. It is whether you will be structurally ready when it arrives.

Most organisations are not. Not because they are bad at AI, but because they have never had to think about what it means for a system to act on their behalf.

This is where a maturity model becomes useful.

The moment everything changes

Most organisations start with AI as a tool. It drafts, summarises, suggests. Humans decide and act. That arrangement is comfortable because responsibility stays obvious.

The trouble starts when AI begins to do things.

Trigger a workflow. Send a response. Issue a refund. Escalate an incident. At that point the system is no longer a tool. It is behaving like an employee. And employees require something tools do not: a clear answer to the question of who is responsible for what they do.

That question is easy to ignore when AI is only suggesting. It becomes impossible to ignore when AI is acting.

The CEO of Global Mofy, a company that recently deployed AI agents across its content production pipeline, described the shift as AI “evolving from ‘Chat’ to ‘Work’.” That is the right frame. And most organisations hit it without being ready for it.

Why teams stall — and what is actually happening

Most organisations stall at the same point: AI is producing real value as an assistant, but the leap to letting it act feels unsafe.

That hesitation is usually right. Not because the technology is unready, but because the organisation is not.

When I review teams stuck at this transition, the gap is almost always the same. Responsibility is implicit. Escalation is informal. Nobody has defined what an acceptable failure looks like, who can stop the system, or who owns the outcome if something goes wrong.

Without making those things explicit, autonomy feels unsafe, because it is unsafe.

There is also something more subtle happening. When AI is only assisting, organisations can still pretend that responsibility has not moved. Humans are the visible decision-makers, even if AI is shaping every decision. The moment AI begins to act, that pretence ends. The system commits, and responsibility becomes observable.

This is when unresolved questions surface that should have been answered much earlier:

Who is allowed to say yes on behalf of the company? What level of error is acceptable without human review? Who owns the consequences of a fast, automated decision? How do we stop the system if it behaves badly?

If there are no clear answers, the organisation cannot safely move forward. What looks like reluctance about AI is usually reluctance about these questions.
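One way to make those four questions hard to dodge is to write them down as fields that must be filled in before anything is allowed to act. The sketch below is illustrative only: `AutonomyCharter`, its field names and `open_questions` are hypothetical names invented for this example, not part of any product or framework.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class AutonomyCharter:
    """Hypothetical record of the four questions; None means 'nobody has answered yet'."""
    approver: Optional[str] = None                      # who may say yes on behalf of the company
    max_unreviewed_error_rate: Optional[float] = None   # acceptable error without human review
    outcome_owner: Optional[str] = None                 # who owns the consequences
    stop_procedure: Optional[str] = None                # how the system is stopped


def open_questions(charter: AutonomyCharter) -> List[str]:
    """Return the names of the questions that still have no explicit answer."""
    return [f.name for f in fields(charter) if getattr(charter, f.name) is None]


# Example: two questions answered, two still implicit.
charter = AutonomyCharter(approver="Head of Support", stop_procedure="disable the feature flag")
unanswered = open_questions(charter)
```

If `unanswered` is not empty, the organisation has a design gap to close before it has a technology decision to make.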

The maturity model helps because it makes the stall diagnosable. It turns vague discomfort into a specific design gap.

The AI Employee Maturity Model

This model is not about what AI can do. It is about what your organisation has designed it to own.

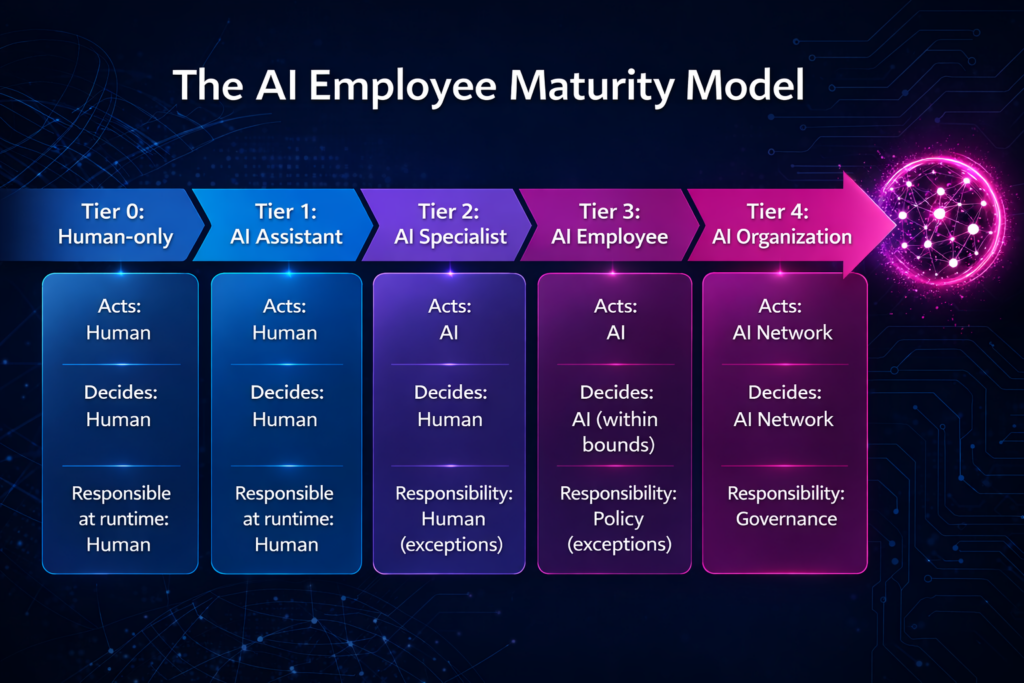

Each tier represents a shift in who acts, who decides, and who is accountable when something happens.

Tier 0: Human-only. AI may exist but it does not act. Humans make all decisions, execute all actions and absorb all ambiguity. Stable, but it scales only through people.

Tier 1: AI Assistant. AI suggests and humans commit. Drafts responses, recommends classifications, surfaces insights. Responsibility remains entirely human even when AI shapes every decision. Most organisations think they are “doing AI” here. They are not wrong. They are early.

Tier 2: AI Specialist. AI owns discrete tasks within clear boundaries. Auto-routing, sending low-risk responses, executing reversible actions. Humans remain responsible but mostly for exceptions. Responsibility begins to move from individuals to rules. This is where most teams stall.

Tier 3: AI Employee. AI runs autonomous workflows under explicit policy. Decision boundaries are defined, escalation paths are designed, stop conditions exist. Humans govern behaviour rather than supervise execution. Responsibility becomes structural.

Tier 4: AI Organisation. Multiple AI agents operate together, coordinating work and acting across systems. Humans no longer review routine decisions. They own policy, acceptable risk and the authority to shut things down. This is what “fully automated” actually means.

What the model is for

The goal is not to reach Tier 4. Different parts of an organisation will sit at different tiers for a long time, and that is fine. A customer support team might be at Tier 2 while a finance team is still at Tier 1. Neither is wrong. Both need to know which tier they are actually at and whether their structure genuinely supports it.

That second part is what most organisations get wrong. They adopt Tier 2 tooling while their governance is still Tier 1. The technology acts, but the responsibility design has not caught up.

The model is a diagnostic. It asks: what does your system actually own right now, and is the structure around it designed for that level of autonomy?

Used that way, it changes the conversation.

Teams stop arguing about whether AI is ready and start asking: which tier are we at? Does our structure actually support that tier? Where are humans compensating for design that has not been done yet?
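The diagnostic can be reduced to a comparison between two numbers: the tier the system is actually operating at, and the tier the surrounding structure was designed for. The sketch below assumes a team can assess both honestly. The `Tier` enum mirrors the model's tier names, and `diagnose` is a hypothetical helper written for this illustration.

```python
from enum import IntEnum


class Tier(IntEnum):
    HUMAN_ONLY = 0
    AI_ASSISTANT = 1
    AI_SPECIALIST = 2
    AI_EMPLOYEE = 3
    AI_ORGANISATION = 4


def diagnose(operating: Tier, governed: Tier) -> str:
    """Compare what the system does with what the structure was designed to support."""
    gap = int(operating) - int(governed)
    if gap > 0:
        # The technology acts at a higher tier than the responsibility design covers.
        return (f"Governance gap of {gap} tier(s): system acts as {operating.name}, "
                f"structure supports {governed.name}")
    return f"Structure supports {operating.name}"


# Example: Tier 2 tooling adopted while governance is still Tier 1.
result = diagnose(Tier.AI_SPECIALIST, Tier.AI_ASSISTANT)
```

The output is deliberately blunt. A positive gap does not say the AI is unready. It says humans are compensating for design that has not been done.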

A concrete example

Consider a support team moving from Tier 1 to Tier 2. At Tier 1, AI drafts responses and suggests priorities. Humans review and send. Errors get caught informally. Responsibility is obvious.

When the team tries Tier 2 by auto-sending low-risk responses, new questions immediately appear. What qualifies as low risk? Who decides that definition? What happens when the AI gets it wrong? How quickly can the automation be paused?

Without the model, a small incident at this stage tends to end the experiment. The response is to turn it off.

With the model, the incident looks different. It is not evidence that autonomy is unsafe. It is evidence that responsibility was not designed clearly enough for Tier 2. The question shifts from “should we turn this off?” to “what is missing in our design?”

That shift, from retreat to diagnosis, is what the model enables.
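To see what "designed clearly enough for Tier 2" might look like for the support team above, here is a minimal sketch. Everything in it is an assumption made for illustration: the `Tier2Policy` class, the category names and the `support_lead` owner are hypothetical, and a real deployment would need far more than this.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Tier2Policy:
    low_risk_categories: Set[str]   # what qualifies as low risk
    policy_owner: str               # who decides that definition
    paused: bool = False            # how the automation is stopped
    escalated: List[str] = field(default_factory=list)  # what went to a human

    def handle(self, ticket_id: str, category: str) -> str:
        """Auto-send only inside the defined boundary; everything else goes to a human."""
        if self.paused or category not in self.low_risk_categories:
            self.escalated.append(ticket_id)
            return "escalate_to_human"
        return "auto_send"


policy = Tier2Policy(low_risk_categories={"password_reset"}, policy_owner="support_lead")
first = policy.handle("T1", "password_reset")   # inside the boundary
second = policy.handle("T2", "refund")          # outside the boundary
policy.paused = True                            # the owner pauses after an incident
third = policy.handle("T3", "password_reset")   # paused: back to humans, not switched off for good
```

The point of the sketch is that pausing is a designed state rather than the end of the experiment. After an incident, the owner can narrow `low_risk_categories` and resume.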

What has to change at each tier

As organisations move through the tiers, what humans do changes. At lower tiers, the value is in judgement, experience and direct intervention. People are the glue that holds the system together.

At higher tiers, the value shifts. People stop being the glue and become the designers of how the system is allowed to behave. They define policy, design escalation paths, set constraints, monitor for drift and govern risk rather than absorb it.

This does not eliminate human roles. It changes them fundamentally.

For leaders, the shift requires accepting that autonomy is not a technical upgrade. It is an organisational decision. It means designing authority explicitly rather than relying on culture. It means being clear about acceptable risk before incidents force the issue, not after.

There is a practical case for doing this well. Databricks found that organisations using proper AI governance frameworks get twelve times more AI projects into production. Governance is not the brake. It is what makes it safe to accelerate.

The question to ask now

OpenClaw took four months to become the most-starred project in GitHub history. Enterprise-grade versions of what it demonstrates are already being built and deployed. The pace is not slowing.

The right question for leaders is not whether to adopt AI that acts on their behalf. That decision is already being made, often by people further down the organisation.

The right question is whether your structure is designed for what your system is already doing.

What tier are you actually operating at? Does your governance match it? Where are humans quietly compensating for design work that has not been done?

The gap between what AI can do and what organisations have designed for is growing every month. The maturity model does not close that gap. But it tells you exactly where you are in it.
